Last updated: 27th November 2019

This article gives an overview of AWS Direct Connect, explains the concept of Virtual Interfaces and shows how to leverage Direct Connect to access a VMware Cloud on AWS SDDC.

You can leverage AWS Direct Connect (DX) to connect your data center (DC) to your VMware Cloud on AWS SDDC and benefit from the DX’s higher bandwidth, lower latency and reduced egress data charges.

Many thanks to Humair Ahmed for many of the network diagrams and detailed explanations, and to Steve Seymour for his excellent re:Invent deep dive session (NET403).

Direct Connect Principles

AWS Direct Connect is essentially a private circuit from your DC to the AWS data centers.

AWS Direct Connect provides a dedicated private connection into AWS and leverages the concept of private and public VIFs.

It is important to understand the difference between a Public Virtual Interface (Public VIF) and a Private Virtual Interface (Private VIF). This is explained in detail in a later section, but first let’s review the primary benefits of a Direct Connect:

- Increased bandwidth throughput

- Decreased latency

- A more consistent network experience than Internet-based connections

- Reduced egress data charges

Direct Connect is often abbreviated to DX. Note that Azure has a similar technology called ExpressRoute.

Direct Connect uses BGP to exchange routes; there is a reminder about BGP in a section below. First, let’s look at the Direct Connect ports and speeds.

Direct Connect Ports and Connections

Direct Connect physical ports are either 1G or 10G links, but you can request a fraction of that bandwidth or bundle several ports together. You can contract them in two different ways:

Dedicated Ports

- Full port speed reserved to customers

- Ordered from AWS

- Supports multiple virtual interfaces

Hosted connections

- Provided on a partner interconnect

- A hosted connection has a defined bandwidth and VLAN. Typically, a partner used to offer a slice of a 1G pipe (such as 50 Mbps or 100 Mbps) to an end customer, essentially breaking a 1G or 10G pipe down into smaller chunks shared between multiple customers. That changed on 19th March 2019 with an AWS announcement.

- Now selected AWS Direct Connect partners can offer higher capacity hosted connections (1, 2, 5 or 10 Gbps).

- Hosted connection supports a single virtual interface

The last point above is important in the context of VMware Cloud on AWS – as a hosted connection only supports a single VIF and we need to attach a dedicated VIF for VMC on AWS, a hosted connection will only work for either native AWS or VMC, not both. This limitation does not apply to dedicated ports as we can create multiple VIFs on top of a dedicated port.

Direct Connect locations have resilient and diverse paths back to the AWS backbone.

LAG – Link Aggregation Group

- Customers can bundle multiple 1G or 10G ports into a single managed connection.

- Traffic will load-balance across these links, per flow.

- You can have up to 4 links in a LAG.
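To make "per flow" load-balancing a little more concrete, here is a minimal Python sketch. It assumes a flow is identified by its 5-tuple and that the link is chosen by hashing that tuple; the `Flow` type, the `pick_link` helper and the CRC32 hash are all illustrative assumptions, not the algorithm AWS or any router vendor actually uses.

```python
import zlib
from typing import NamedTuple


class Flow(NamedTuple):
    """Hypothetical 5-tuple identifying one traffic flow."""
    src_ip: str
    dst_ip: str
    protocol: str
    src_port: int
    dst_port: int


def pick_link(flow: Flow, link_count: int) -> int:
    """Return the index of the LAG member link that would carry this flow.

    Assumption: a simple CRC32 hash of the 5-tuple, modulo the number of links.
    """
    if not 1 <= link_count <= 4:
        raise ValueError("a LAG holds between 1 and 4 links")
    key = "|".join(str(part) for part in flow).encode()
    return zlib.crc32(key) % link_count


flow = Flow("10.10.1.5", "10.33.1.10", "tcp", 49152, 443)
# Every packet of the same flow hashes to the same link.
print(pick_link(flow, 4) == pick_link(flow, 4))
```

The practical consequence is that a single flow never exceeds the speed of one member link, while many concurrent flows spread across all of them.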

Key terminology to understand:

Connection = physical circuit

Virtual Interface (VIF) = logical interface on AWS virtual router

Direct Connect Routing

Direct Connect uses BGP to exchange routes and leverages private and public virtual interfaces for BGP peering.

BGP is the only routing protocol permitted to exchange routes between the on-premises router and the AWS Direct Connect Interface.

BGP Reminder

BGP (Border Gateway Protocol) is an exterior routing protocol designed to exchange prefix information between different autonomous systems.

BGP neighbors build peering connections over TCP (port 179) and exchange routing information (prefixes).

In BGP, a set of routers under a single administrative authority forms an Autonomous System (AS).

BGP uses Autonomous System Numbers (ASNs) to identify a specific BGP domain. A 2-byte ASN is either public (1 to 64,511) or private (64,512 to 65,534); 65,535 is reserved.
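As a quick illustration of these ranges, here is a small Python sketch. The `classify_asn` helper is hypothetical and only covers 2-byte ASNs; it follows the simplified public/private split used in this article and assumes that 0 and 65,535 are reserved rather than usable.

```python
def classify_asn(asn: int) -> str:
    """Classify a 2-byte ASN as 'public', 'private' or 'reserved'.

    Simplified view: 1-64511 public, 64512-65534 private,
    0 and 65535 treated as reserved (assumption stated in the text above).
    """
    if not 0 <= asn <= 65535:
        raise ValueError("not a 2-byte ASN")
    if asn in (0, 65535):
        return "reserved"
    if 64512 <= asn <= 65534:
        return "private"
    return "public"


for asn in (64512, 65001, 64661, 64511):
    print(asn, classify_asn(asn))
```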

In the picture above, Router A will build a BGP peering session with Router B.

- Router A will tell Router B: “Hi, Router B. My local networks in Autonomous System 64512 are 10.10.0.0/16 and 10.20.0.0/16. If you want to reach these routes, just send the traffic to 192.168.10.1 and I will send it to its destination. What routes do you know?”

- Router B will tell Router A: “Hi, Router A. My local networks in Autonomous System 65001 are 10.30.0.0/16 and 10.40.0.0/16. If you want to reach these routes, just send the traffic to 192.168.10.2 and I will send it to its destination. What routes do you know?”
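The conversation between Router A and Router B can be modelled in a few lines of Python. This is only a toy sketch of the route exchange, assuming each router simply hands over its local prefixes with itself as next hop; the `Router` class and `establish_ebgp` function are made up for illustration, and real BGP (sessions over TCP, path attributes, best-path selection) is far richer.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Router:
    name: str
    asn: int
    peering_ip: str
    local_networks: List[str]
    # prefix -> (next hop, originating ASN)
    learned_routes: Dict[str, Tuple[str, int]] = field(default_factory=dict)

    def advertise(self) -> List[Tuple[str, str, int]]:
        """'My local networks are X and Y, send the traffic to my peering IP.'"""
        return [(prefix, self.peering_ip, self.asn) for prefix in self.local_networks]

    def receive(self, updates: List[Tuple[str, str, int]]) -> None:
        """Record every prefix announced by the neighbor."""
        for prefix, next_hop, origin_asn in updates:
            self.learned_routes[prefix] = (next_hop, origin_asn)


def establish_ebgp(a: Router, b: Router) -> None:
    """Exchange prefixes in both directions between two different ASNs."""
    if a.asn == b.asn:
        raise ValueError("eBGP peers must belong to different autonomous systems")
    b.receive(a.advertise())
    a.receive(b.advertise())


router_a = Router("Router A", 64512, "192.168.10.1", ["10.10.0.0/16", "10.20.0.0/16"])
router_b = Router("Router B", 65001, "192.168.10.2", ["10.30.0.0/16", "10.40.0.0/16"])
establish_ebgp(router_a, router_b)
print(router_a.learned_routes)
```

After the exchange, Router A knows to reach 10.30.0.0/16 and 10.40.0.0/16 via 192.168.10.2, and Router B knows the reverse.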

In the context of a Direct Connect connection, there will be an external BGP (eBGP) session between two different ASNs: one belonging to the customer and one belonging to AWS.

Direct Connect sessions in a VMware Cloud on AWS environment now use private ASN 64512 as the default local ASN. The local ASN is editable and any private ASN can be used (64512 to 65534). If ASN 64512 is already being used in your on-premises environment, you must use a different ASN.

Virtual Interfaces

Direct Connect routing connectivity can be established with a Public VIF or a Private VIF.

Public Virtual Interface (Public VIF)

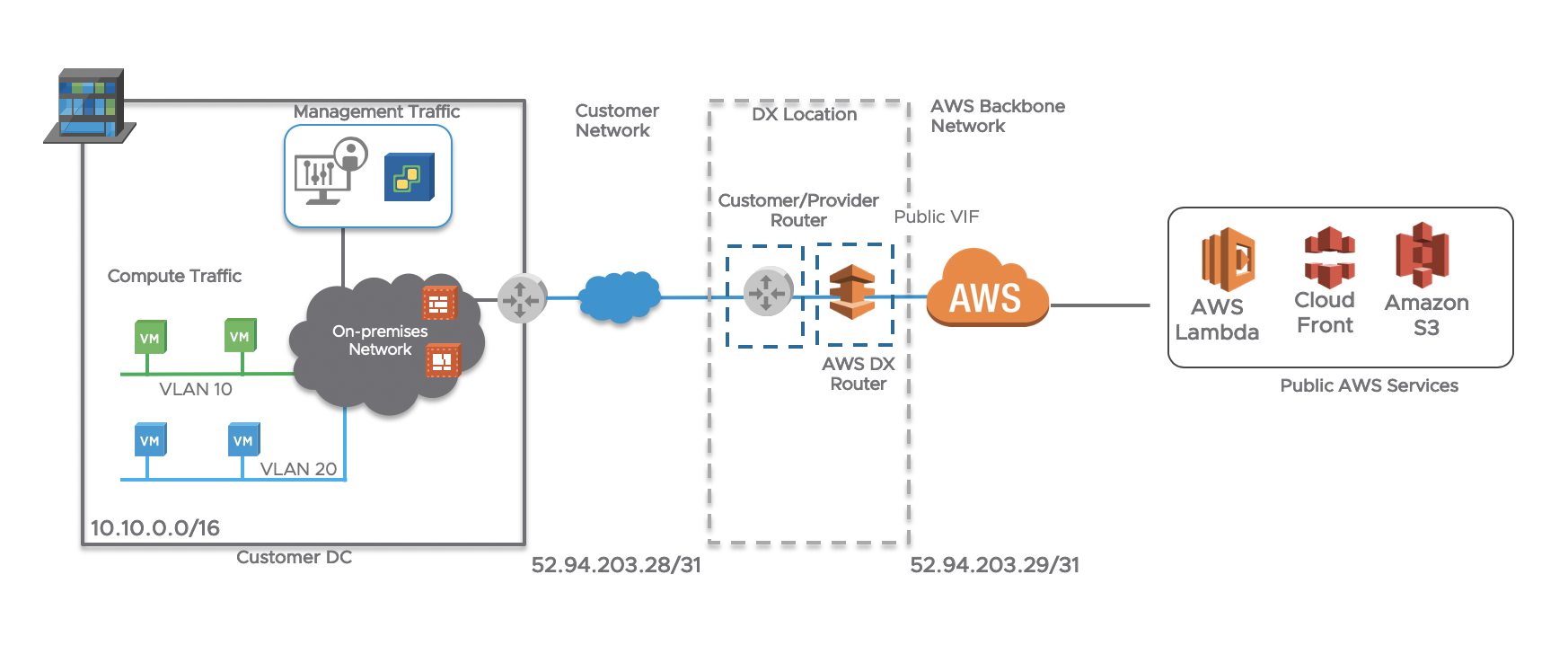

By building a BGP session to a Public VIF, the on-prem router will learn all the public prefixes that belong to AWS (over 2000 and counting). This means that a user/server on-prem will access ‘public services’ (such as an S3 bucket) via the Direct Connect instead of going over the Internet.

Here are some more characteristics of a public VIF:

- Private dedicated connection to AWS backbone

- Uses Public IP address space and terminates at the AWS region-level

- Reliable connectivity with dedicated network performance to connect to AWS public endpoints (S3, DynamoDB)

- Customers receive Amazon’s global IP routes via BGP, and they can access publicly routable Amazon services

Here is the logical view:

Private Virtual Interface (Private VIF)

By building a BGP session to a Private VIF, the on-prem router will learn all the private prefixes within a VPC (or within VMware Cloud on AWS). This means that a user/server on-prem will access services that reside within a VPC (EC2, RDS, etc…) or within VMware Cloud on AWS via the Direct Connect, instead of going over the Internet.

Here are some more characteristics of a private VIF:

- Private dedicated connection to AWS backbone

- Uses Private IP address space and terminates at the customer VPC-level

- Reliable consistent connectivity with dedicated network performance to connect directly to customer VPC

- AWS only advertises the entire customer VPC CIDR or the VMC networks via BGP

Private VIF

The Private VIF enables customers to receive and advertise only the private routes they need to share and is the ideal model in the context of VMware Cloud on AWS.

In earlier releases of VMware Cloud on AWS, certain restrictions meant that we had to use Public VIFs to pass some of the traffic between the customer DC and VMware Cloud on AWS. These restrictions have now been lifted.

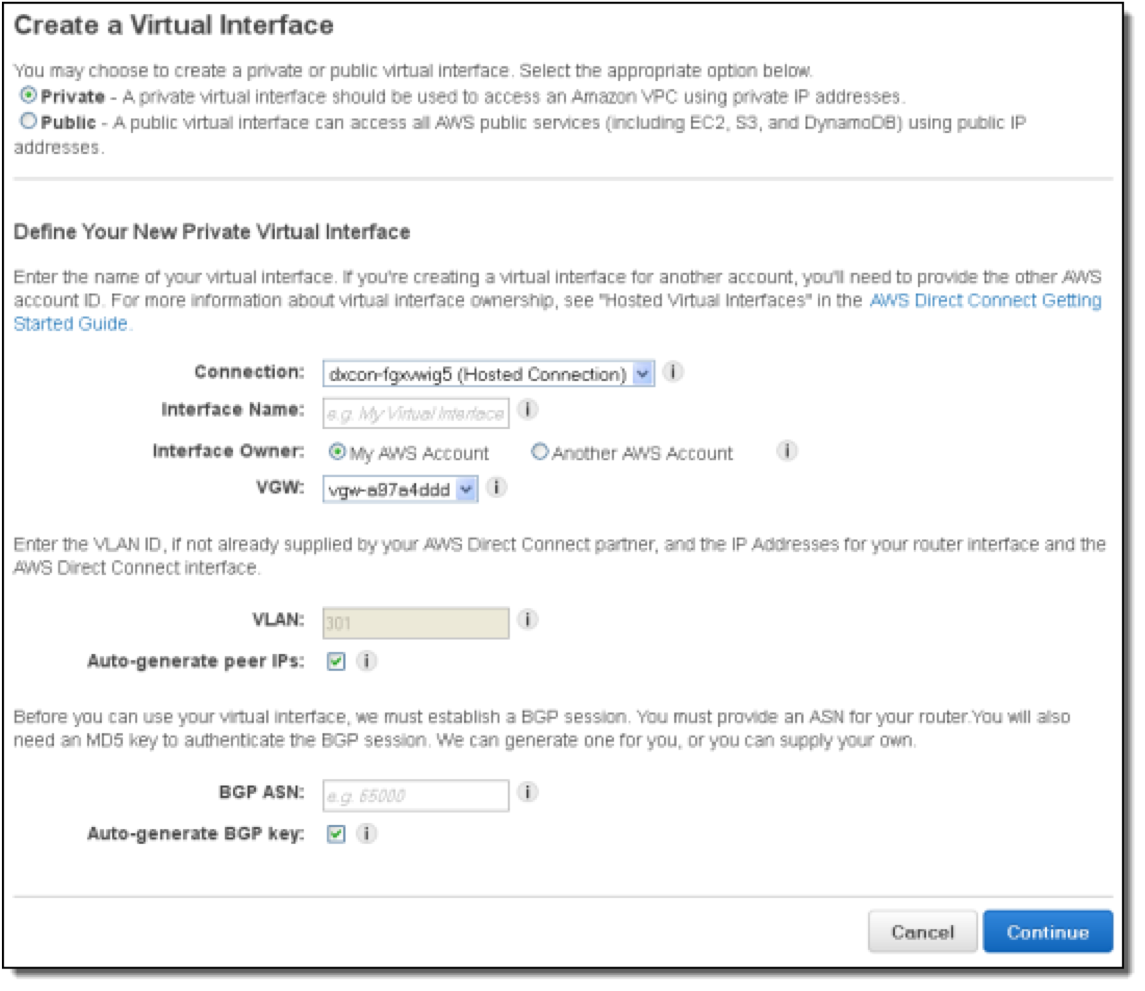

How to configure a Private Virtual Interface?

- Go to the AWS Console

- Go to “Direct Connect”

- Go to “Virtual Interface” Section and create virtual interface

- Select Private

- Give it a name

- Attach it to your account or another account (Hosted VIF) – in the context of VMware Cloud on AWS, it will actually be a hosted VIF

- Define the VLAN

- Peer IPs can be auto-generated (these are the IP addresses of the BGP routers)

- Select the BGP AS Number

- BGP Key can be auto-generated

Once you have created the VIF, you will be able to download the BGP configuration from the AWS console and implement it on your on-prem router. Watch the session come up and traffic flow across the Direct Connect!

Direct Connect with VMware Cloud on AWS

The way VMware and AWS integrated the Direct Connect into VMware Cloud on AWS is very neat and ridiculously intuitive. Not everything we’ve ever built is perfect but our engineering team has done a stellar job with this particular feature.

First thing to know is that if you already have a DX with AWS, you can use it with VMware Cloud on AWS. We use the existing pipe and build a logical BGP peering on top of it.

Here are the steps to deploy it:

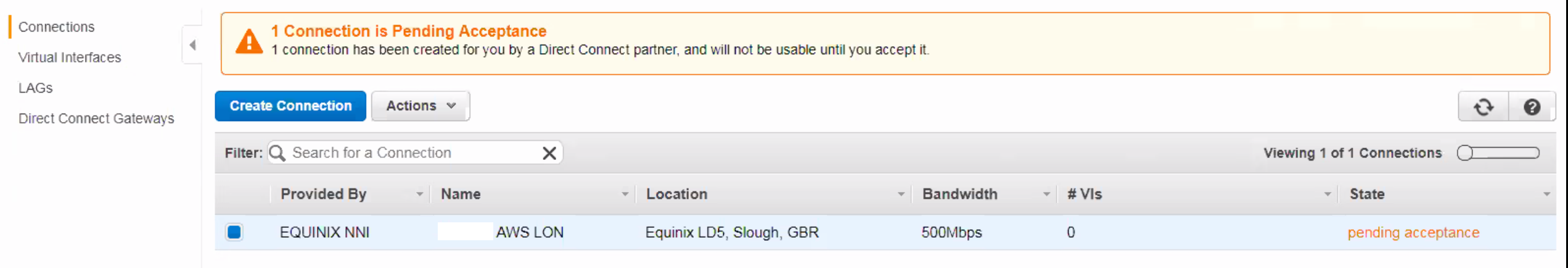

Request a Direct Connect circuit from AWS or an AWS Partner if you don’t have one already. Once that’s done, you will need to accept the connection on the AWS console. On the screenshot below, I ordered a 500 Mbps connection from Equinix into the AWS London region.

Note that the connection below is a “Hosted Connection” (as it’s less than 1G). The “Hosted Connection” should be assigned to the customer-managed AWS account, not to the shadow AWS account (the AWS Account ID displayed for the SDDC under the Direct Connect tab).

Once you accept it, the state will move from “pending acceptance” to “pending” and, shortly afterwards, to “available”.

Once the Direct Connect Connection in the AWS console is available, create a Virtual Interface.

Another reminder from a previous paragraph – a hosted connection will only allow you a single VIF whereas a dedicated port will allow multiple VIFs.

- Select a Private VIF

- Give it a name

- Attach it to another account (Hosted VIF) – the VIF is not actually attached to your own AWS account but to the shadow AWS account we deployed the VMware Cloud on AWS SDDC in and that we manage on your behalf.

- Specify the shadow AWS account where your VMware Cloud on AWS SDDC is deployed. You can find the shadow AWS account on the VMC console, under “Networking & Security” / “System” / “Direct Connect”.

- Define the VLAN (might be already specified)

- Peer IPs can be auto-generated. I have seen some issues with the auto-generation option so I would recommend you specify the IP addresses on either side. You can for example use 169.254.255.1/30 and 169.254.255.2/30.

- Select the BGP AS Number. That would be the AS number on your side.

- BGP Key can be auto-generated
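If you prefer to script this step rather than click through the console, the same settings can be collected into a request for the Direct Connect API. The sketch below only builds and sanity-checks the parameters; `build_hosted_vif_request` is a hypothetical helper, and the connection ID, account ID and VIF name are placeholders. The dictionary is shaped after the parameters of boto3's `allocate_private_virtual_interface` call on the `directconnect` client, which I would double-check against the boto3 reference before relying on it.

```python
import ipaddress
from typing import Optional


def build_hosted_vif_request(
    connection_id: str,
    shadow_account_id: str,
    name: str,
    vlan: int,
    customer_asn: int,
    customer_address: str,
    amazon_address: str,
    auth_key: Optional[str] = None,
) -> dict:
    """Assemble the parameters for a hosted private VIF (illustrative helper)."""
    customer = ipaddress.ip_interface(customer_address)
    amazon = ipaddress.ip_interface(amazon_address)
    # Both BGP peer IPs must sit in the same subnet and must differ.
    if customer.network != amazon.network:
        raise ValueError("peer IPs must be in the same subnet")
    if customer.ip == amazon.ip:
        raise ValueError("peer IPs must be different")

    allocation = {
        "virtualInterfaceName": name,
        "vlan": vlan,
        "asn": customer_asn,  # the AS number on your side
        "addressFamily": "ipv4",
        "customerAddress": customer_address,
        "amazonAddress": amazon_address,
    }
    if auth_key is not None:  # omit it to let AWS generate the BGP key
        allocation["authKey"] = auth_key
    return {
        "connectionId": connection_id,
        "ownerAccount": shadow_account_id,  # the shadow AWS account of the SDDC
        "newPrivateVirtualInterfaceAllocation": allocation,
    }


request = build_hosted_vif_request(
    connection_id="dxcon-xxxxxxxx",    # placeholder
    shadow_account_id="123456789012",  # placeholder
    name="vmc-private-vif",            # placeholder
    vlan=302,
    customer_asn=64661,
    customer_address="169.254.255.1/30",
    amazon_address="169.254.255.2/30",
)
print(request)
# With boto3 installed and AWS credentials configured, this would be sent as:
#   boto3.client("directconnect").allocate_private_virtual_interface(**request)
```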

Once the VIF is created, select “Download Router Configuration”. A sample is shown further below.

On the VMware Cloud on AWS Console, you will need to accept the VIF and it will become attached to the SDDC.

Once the BGP configuration is set up on your on-prem router, you will see the BGP session coming up and routes being advertised and received.

In the example below, my on-prem networks (192.168.0.0/16, 10.32.0.0/11, 10.64.0.0/10 and 10.128.0.0/9) can now communicate with the VMC networks (10.33.1.0/26, 10.33.1.128/25, 192.168.1.0/24, 10.33.0.0/24 and 10.33.2.0/24) over a Direct Connect 500 Mbps circuit.
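One detail worth noticing in these ranges: the on-prem summaries are broad enough to contain some of the VMC prefixes (10.33.1.0/26 sits inside 10.32.0.0/11, for instance). Routers deal with that kind of overlap through longest-prefix matching, where the most specific route wins. Here is a small Python sketch of that rule; `longest_prefix_match` is a hypothetical helper and the combined table is only there for illustration, not a dump from a real router.

```python
import ipaddress
from typing import List, Optional

ON_PREM = ["192.168.0.0/16", "10.32.0.0/11", "10.64.0.0/10", "10.128.0.0/9"]
VMC = ["10.33.1.0/26", "10.33.1.128/25", "192.168.1.0/24", "10.33.0.0/24", "10.33.2.0/24"]


def longest_prefix_match(destination: str, prefixes: List[str]) -> Optional[str]:
    """Return the most specific prefix containing the destination, if any."""
    address = ipaddress.ip_address(destination)
    networks = [ipaddress.ip_network(prefix) for prefix in prefixes]
    candidates = [network for network in networks if address in network]
    if not candidates:
        return None
    return str(max(candidates, key=lambda network: network.prefixlen))


table = ON_PREM + VMC
print(longest_prefix_match("10.33.1.10", table))    # a VMC address
print(longest_prefix_match("192.168.50.1", table))  # an on-prem address
```

The first lookup resolves to the VMC /26 rather than the on-prem /11, and the second to the on-prem /16, because no more specific VMC prefix covers it.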

Here is another example, where we receive the default route over DX. As I discuss in this other post, this means all the Internet-bound traffic from the VMC VMs will go over the DX.

In the video below, you can see what actually happens on the VMware Cloud on AWS console when 1) the VIF is attached to the VMware Cloud on AWS account and 2) the BGP session is configured on the on-prem router.

Sample BGP config

You can stop reading here if routing is not your cup of tea. This goes a bit deeper and is aimed at the network engineers who would set up the BGP session on the on-prem router.

Below is an extract of the configuration automatically generated by AWS once the VIF is created. You can implement it on your BGP router (typically a Cisco or Juniper router, but it can also land on a firewall or an NSX edge).

I will explain the key settings in more detail below.

    ! Amazon Web Services
    !=======================================IPV4=======================================
    ! Direct Connect
    ! Virtual Interface ID: dxvif-XXXXXXX
    !
    ! ------------------------------------------------------------------
    ! Interface Configuration
    interface GigabitEthernet0/1.302
     description "Direct Connect to your Amazon VPC or AWS Cloud"
     encapsulation dot1Q 302
     ip address 169.254.255.1 255.255.255.252
    ! ------------------------------------------------------------------
    ! Border Gateway Protocol (BGP) Configuration
    !
    ! BGP is used to exchange prefixes between the Direct Connect Router and your
    ! Customer Gateway.
    !
    ! If this is a Private Virtual Interface, your Customer Gateway may announce a default route (0.0.0.0/0),
    ! which can be done with the 'network' and 'default-originate' statements. To advertise other/additional prefixes,
    ! copy the 'network' statement and identify the prefix you wish to advertise. Make sure the prefix is present in the routing
    ! table of the device with a valid next-hop.
    !
    !
    router bgp 64661
     address-family ipv4
      neighbor 169.254.255.2 remote-as 64512
      neighbor 169.254.255.2 password s3cur3p$ssw0rd
      network 0.0.0.0
      ! default-originate might be used instead to advertise a default route.
      network 192.168.0.0 255.255.0.0
      network 10.32.0.0 255.224.0.0
      ! etc...

Let’s rewind a bit:

- interface GigabitEthernet0/1.302 is the interface on your BGP router (with GigabitEthernet0/1 the physical interface and .302 the sub-interface, tagged with VLAN 302 by the encapsulation dot1Q 302 statement)

- 169.254.255.1 is the local IP address on the BGP router

- router bgp 64661 – defines the local AS in your DC (AS 64661 in our example)

- neighbor 169.254.255.2 remote-as 64512 – defines the remote peer (169.254.255.2) and remote BGP AS (64512) on the AWS side

- network 0.0.0.0 – would be used to advertise the default route from on-prem to VMC (to force Internet-bound traffic to go via an on-prem Internet pipe)

- network 192.168.0.0 255.255.0.0 – would be used to advertise the 192.168.0.0/16 range to the VMC SDDC
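The configuration uses dotted netmasks while the rest of this post uses CIDR notation. If you want to double-check the mapping between the two, Python's standard `ipaddress` module can do it; the `to_cidr` and `peer_addresses` helpers below are just illustrative names.

```python
import ipaddress
from typing import List


def to_cidr(address: str, netmask: str) -> str:
    """Convert an IOS-style 'network <address> <netmask>' pair to CIDR notation."""
    return str(ipaddress.ip_network(f"{address}/{netmask}"))


def peer_addresses(address: str, netmask: str) -> List[str]:
    """List the usable addresses of the subnet an interface address belongs to."""
    interface = ipaddress.ip_interface(f"{address}/{netmask}")
    return [str(host) for host in interface.network.hosts()]


print(to_cidr("192.168.0.0", "255.255.0.0"))              # 192.168.0.0/16
print(to_cidr("10.32.0.0", "255.224.0.0"))                # 10.32.0.0/11
print(peer_addresses("169.254.255.1", "255.255.255.252")) # the two BGP peer IPs
```

The last call shows why a /30 is a natural fit for the peering link: it contains exactly two usable addresses, 169.254.255.1 for the on-prem router and 169.254.255.2 for the AWS side.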

Hopefully this detailed blog post will help you understand the benefits of the Direct Connect and how it integrates with VMware Cloud on AWS.

Hi,

Thanks for the information, very informative.

If you have a “hosted connection”, and so only one VIF, do I have the capability to configure vMotion between on-prem and VMC?

Thanks

Chris

Hi Chris. Yes, you can! A live vMotion requires an extended network to maintain the same IP during the vMotion. We can extend a network through HCX or L2 VPN over the Direct Connect VIF (on a hosted connection or dedicated port) and then start vMotioning stuff back and forth across the DX.

Thanks again. What connectivity is required from a management perspective for vMotion? Are the VMkernel concepts still there and, if so, does on-prem access them via the overlay?

Yes, you still need the VMkernel. If you use the NSX L2VPN to stretch networks for a vMotion, you need to follow the instructions here (https://docs.vmware.com/en/VMware-Cloud-on-AWS/services/com.vmware.vmc-aws.networking-security/GUID-058BFCAF-A3C6-4735-82C6-0C58A0572456_copy.html). Otherwise, if you use HCX to stretch networks for a vMotion, you need to follow the instructions here (https://docs.vmware.com/en/VMware-NSX-Hybrid-Connect/3.5.1/user-guide/GUID-33509F90-794D-40D0-8BD4-2866F12E8516.html) to configure the HCX WAN Interconnect correctly to allow vMotion.

Final note:

– vMotion with NSX L2VPN is only possible over DX (not Internet).

– vMotion with HCX L2VPN is possible over DX or Internet.

Very useful info

hey Nico

If I leverage HCX IPsec VPN and L2VPN for management / vMotion / compute traffic, do we still need to set up the route-based VPN? Or, in a deployment, do we need a route-based VPN (or policy-based VPN) to provide connectivity between on-prem and the SDDC “first” for management, with HCX running on top of this (or actually in parallel) to provide the rest? Sorry, I am a bit confused on this part…

Barany
