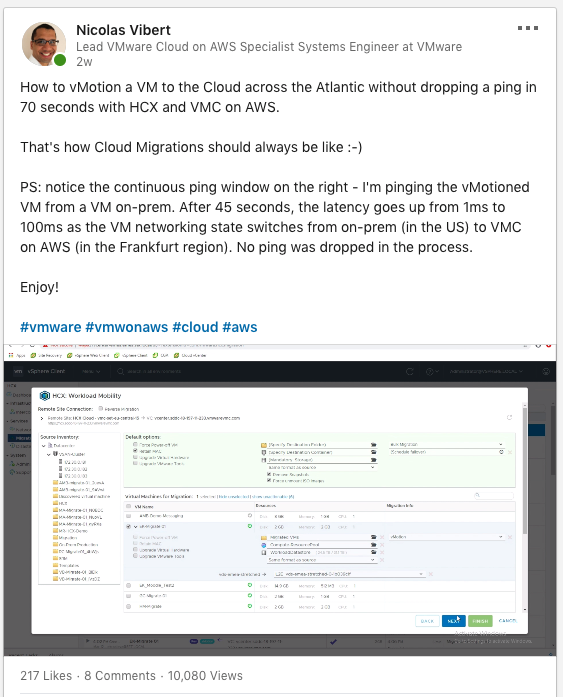

A few weeks ago, I posted a video on LinkedIn and received far more interaction than I expected:

It’s probably worth explaining the magic behind the scenes and walking through how I built this short demo.

A lot of the credit goes to Chris Lennon, Adam Bohle and Michael Armstrong for setting up our lab in the first place…

The full video is below:

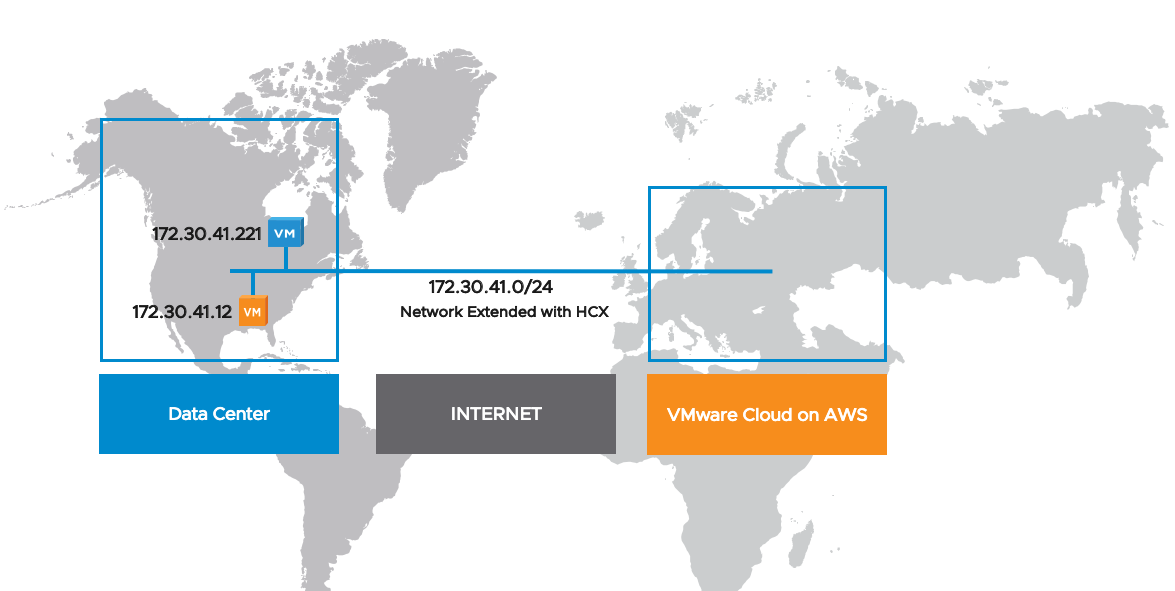

What is actually happening is a vMotion of the orange VM (below) over the Internet from our on-prem DC (which is actually a co-lo in Chicago) to our VMware Cloud on AWS SDDC in Frankfurt (Germany).

I will run a continuous ping from the blue VM to the orange VM.

For this, I am going to use the HCX tool, which comes included with VMware Cloud on AWS.

HCX can be a bit tricky to install, so make sure you have looked at the prerequisites and gone through the HCX feature walkthrough. That will save you time in the long run.

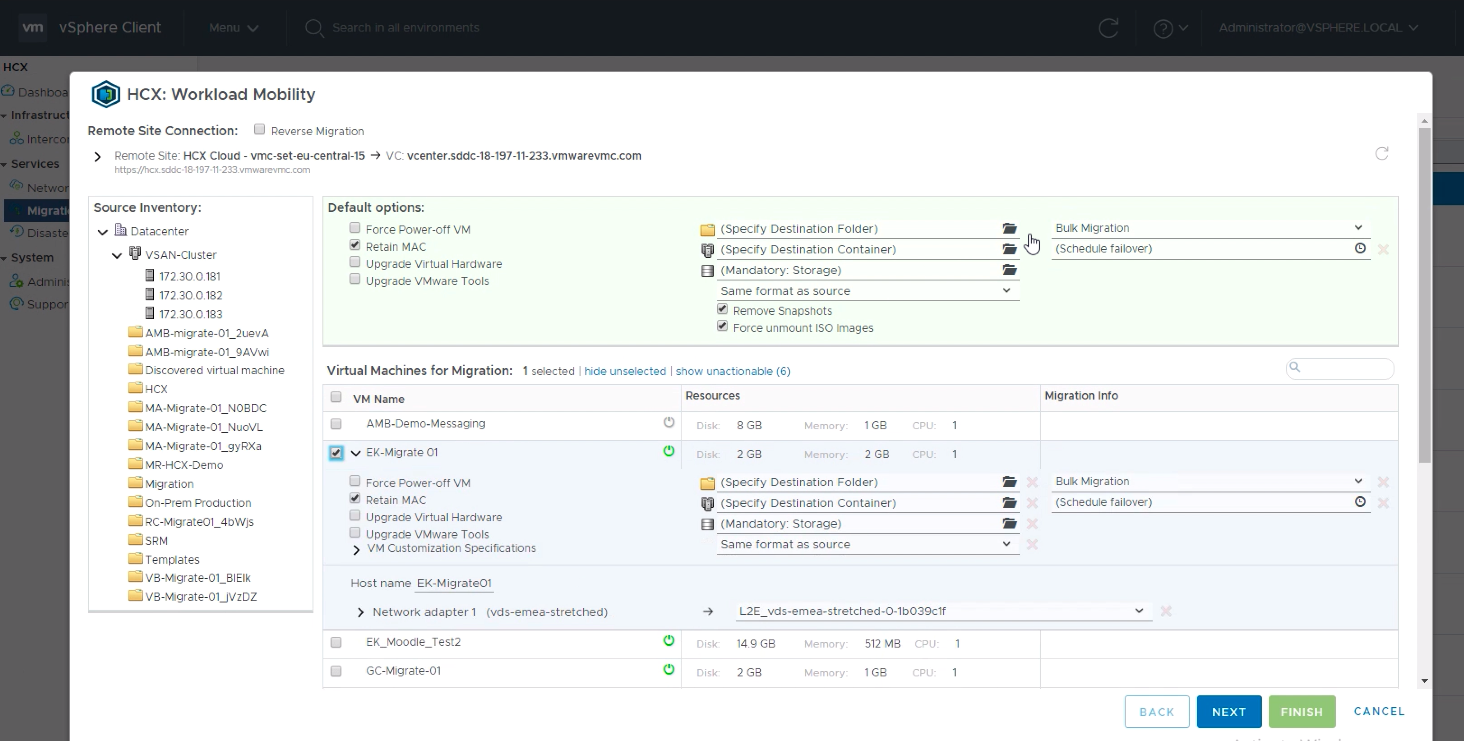

Before the recording started, I had logged on to our on-prem vCenter and clicked on HCX and “Migration”. From this page, I can see my vCenter inventory and select the workloads I am going to move to the Cloud.

HCX migration is always driven from the ‘on-prem vCenter’. Even when we want to bring workloads back from the Cloud, it’s from the on-prem vCenter: just click on the “Reverse Migration” tickbox (just under the word Mobility at the top) and it will show you the inventory of Cloud workloads you might want to bring back on-prem.

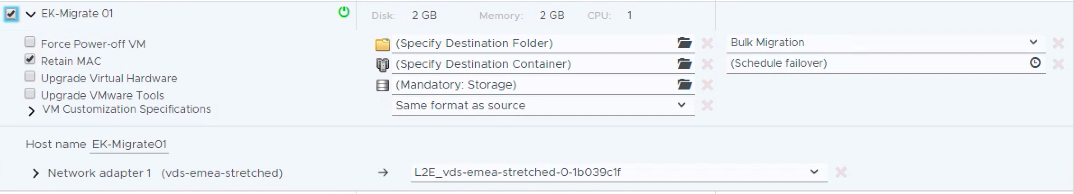

I selected a tiny Linux VM in my inventory (“EK-Migrate 01”). I could have selected an entire cluster/folder/vCenter but for the video, I just want to show a cross-Atlantic vMotion.

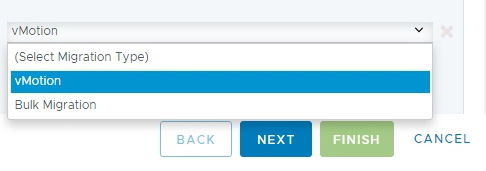

There are 4 methods supported to migrate with HCX – cold vMotion, live vMotion, Bulk Migration and Cloud Motion with vSphere Replication. Emad Younis explained it brilliantly in his blog so I won’t repeat it here. I select vMotion:

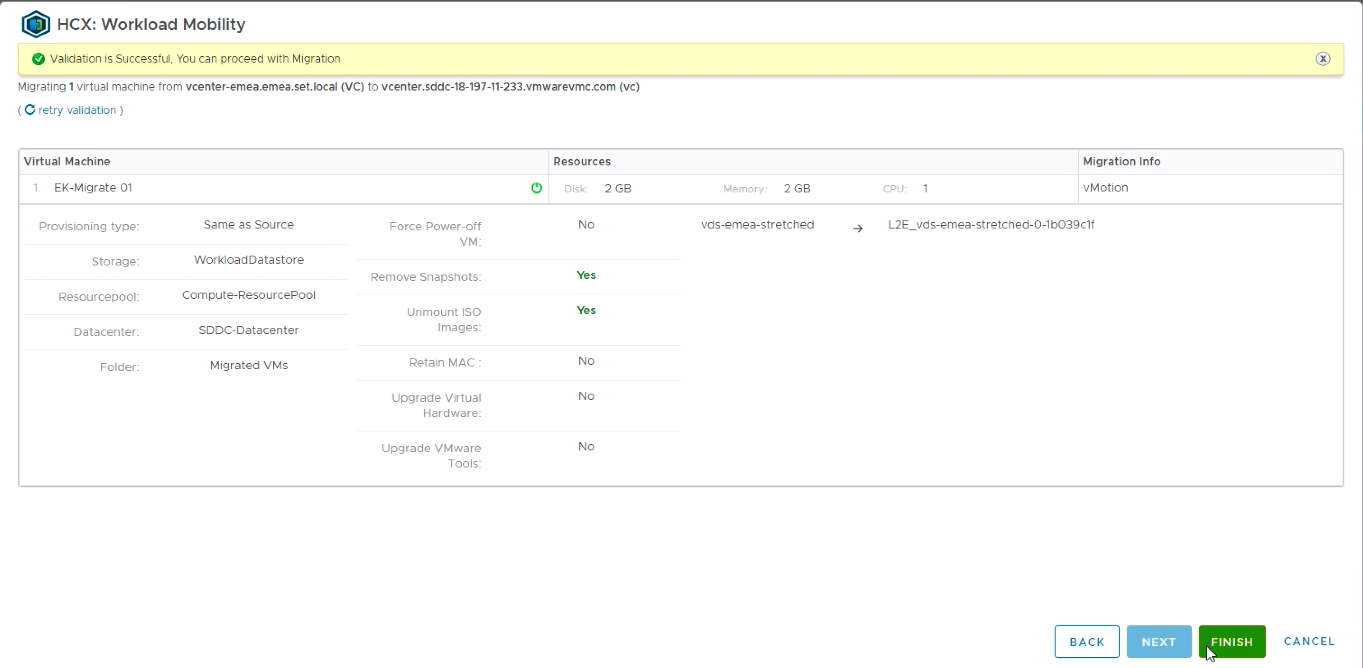

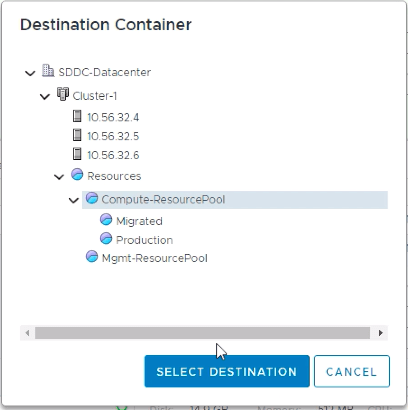

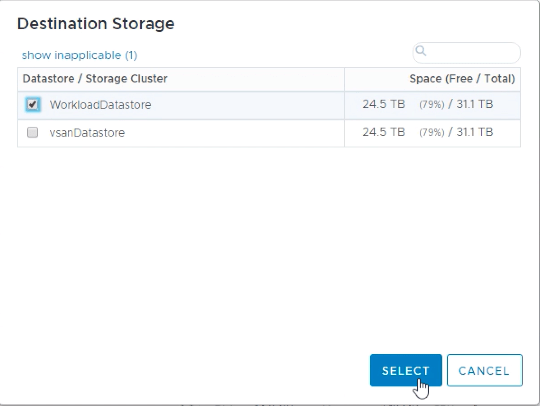

Then I need to decide where I am going to migrate my VM. I need to select a resource pool (only the “Compute-ResourcePool” and its sub-pools are acceptable as a destination with VMware Cloud on AWS) and a destination datastore (only the “WorkloadDatastore” is acceptable as a storage destination with VMware Cloud on AWS).
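To make the destination rule concrete, here is a minimal sketch of the check in Python. It is not part of HCX and does not call any VMware API. The slash-separated pool path format and the function name are assumptions made purely for illustration, and the datastore name is compared case-insensitively.

```python
# Illustrative only: a plain-Python restatement of the destination rule above.
# The "parent/child" pool path format and this helper are hypothetical.
ALLOWED_POOL_ROOT = "Compute-ResourcePool"
ALLOWED_DATASTORE = "WorkloadDatastore"


def is_valid_destination(pool_path: str, datastore: str) -> bool:
    """Return True if the pool is Compute-ResourcePool (or a sub-pool of it)
    and the datastore is the workload datastore."""
    parts = [p for p in pool_path.split("/") if p]
    pool_ok = bool(parts) and parts[0] == ALLOWED_POOL_ROOT
    store_ok = datastore.lower() == ALLOWED_DATASTORE.lower()
    return pool_ok and store_ok


if __name__ == "__main__":
    # "demo-pool" is a made-up sub-pool name.
    print(is_valid_destination("Compute-ResourcePool/demo-pool", "WorkloadDatastore"))
    print(is_valid_destination("Some-Other-Pool", "WorkloadDatastore"))
```

In practice the HCX wizard only offers valid targets, so a check like this is mostly useful as a sanity test when scripting a list of planned migrations.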

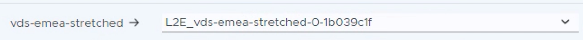

The VM will stay attached to the same network (this is obviously key for a live vMotion): during the installation and configuration of HCX, we had previously stretched the “vds-emea-stretched” network and the VM will move across to the Cloud side on this network.

Once I press Next, there will be a brief compatibility check before I go ahead with the migration.

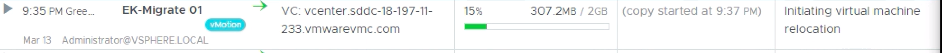

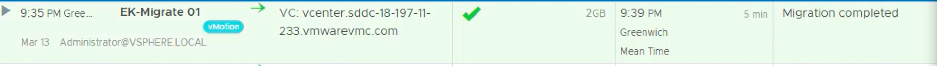

The vMotion starts at 9:35PM (don’t I have better things to do in the evening than recording this type of stuff – get a life, Nico!!).

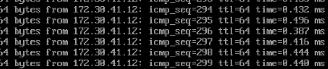

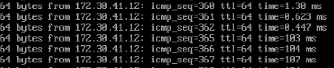

I am running a continuous ping from another on-prem VM attached to the same network (the blue VM on the diagram below, at 172.30.41.221). The ping response time is sub-millisecond.

After a few seconds, the networking component of the VM is switched across to its location in the Cloud and my ping is now from on-prem to the VM in the Cloud – unsurprisingly the response time goes beyond 100ms as we need to cross the Atlantic ocean and back. You can also see we only drop a couple of packets (#363 and #364) in the process.
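If you want to pull the same two numbers (dropped packets and the latency jump) out of a saved ping log rather than eyeballing the terminal, a small script can do it. The sketch below assumes Linux-style `ping` reply lines. The sample lines, the target address 172.30.41.50 and the exact timings are made up for illustration and are not taken from the recording.

```python
import re

# Matches Linux-style replies such as:
#   64 bytes from 172.30.41.50: icmp_seq=362 ttl=64 time=0.41 ms
REPLY = re.compile(r"icmp_seq=(\d+).*?time=([\d.]+)\s*ms")


def summarize_ping(lines):
    """Return (missing sequence numbers, {seq: round-trip time in ms})."""
    rtts = {}
    for line in lines:
        match = REPLY.search(line)
        if match:
            rtts[int(match.group(1))] = float(match.group(2))
    if not rtts:
        return [], rtts
    first, last = min(rtts), max(rtts)
    # Any sequence number between the first and last reply with no reply was dropped.
    missing = [seq for seq in range(first, last + 1) if seq not in rtts]
    return missing, rtts


# Hypothetical sample: the address and timings are invented for this example.
sample = [
    "64 bytes from 172.30.41.50: icmp_seq=361 ttl=64 time=0.38 ms",
    "64 bytes from 172.30.41.50: icmp_seq=362 ttl=64 time=0.41 ms",
    "64 bytes from 172.30.41.50: icmp_seq=365 ttl=64 time=104 ms",
    "64 bytes from 172.30.41.50: icmp_seq=366 ttl=64 time=103 ms",
]

missing, rtts = summarize_ping(sample)
# First reply above 100 ms marks the point where the VM answers from the Cloud.
first_slow = min(seq for seq, rtt in rtts.items() if rtt > 100)
print("dropped:", missing)
print("first reply over 100 ms: icmp_seq", first_slow)
```

Feeding it a real capture (for example `ping 172.30.41.50 | tee ping.log`, with your own target address) gives the cutover point and the exact packets lost.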

By 9:39PM, the vMotion is completed.

And while not all migrations will be as quick and straightforward (it’s a tiny VM and obviously there are other considerations to keep in mind before shuffling virtual machines across the globe), this post has hopefully explained the art of the possible.

As a follow-up, I would recommend Ivan’s blog post – his perspective is worth reading.

Thanks for reading.
