What I find fascinating about Kubernetes is that, despite its popularity, it is always changing and evolving. Large-scale adoption brings more complex requirements and challenges. But delivering new technical features isn’t always the most difficult challenge: the complexity is in adapting Kubernetes to fit the operational model of the teams that run it.

That’s why the Kubernetes Gateway APIs were created.

Ingress API vs Kubernetes Gateway API

One of the first tasks to complete, once you’ve deployed your applications on your Kubernetes clusters, is to provide access to these applications. This is typically known as “North-South” access into your cluster (as opposed to “East-West” traffic between pods within your cluster).

The Ingress API has been the standard way to address this requirement: it is the common way to route traffic from your external load balancer to your Kubernetes services.

The problem with the Ingress API is that 1) it didn’t provide the advanced load-balancing features users wanted and 2) it was impractical for users to manage.

Tech vendors have largely addressed the lack of functionality by using annotations to extend the platform. But annotations end up creating inconsistency, since each vendor defines its own.

So the Kubernetes networking Special Interest Group (SIG Network) started to work towards a new API to provide additional functionality (for more details, read here) and a different consumption model. It’s interesting that the API is designed around the roles of the users who will work with it.

One of the benefits of these new APIs is that the Ingress API is essentially split into separate functions: one to describe the Gateway and one for the Routes to the back-end services. Splitting these two functions gives operators the ability to change and swap gateways but keep the same routing configuration (if I had to compare it with something I know well, it’s as if you swap out a Cisco router for a Juniper one while keeping the same configuration).

Another benefit is simply based on the operational model. Platform owners might be the ones owning the deployment and the type of Gateways, while developers and operators might be the ones updating the routes to the services they want to expose. So you can apply different levels of role-based access control to these components.

Finally, the Gateway API adds support for more sophisticated load-balancing features like weighted traffic splitting (as we will see in the lab shortly) and HTTP header-based matching and manipulation.

The Gateway API came out around the time of K8S v1.21 (it is installed as a set of CRDs rather than shipping inside Kubernetes itself), and many vendors started updating their platforms to support not just the Ingress API but also the new Gateway API. That includes my employer: HashiCorp released an update to Consul to support these new specifications, which gives me an excuse to test it out. Let’s check it out in the lab.

Lab Time!

Let’s get hands-on with the Consul API Gateway. I’m going to go through the Learn@HashiCorp tutorial. The guides on HashiCorp Learn are seriously excellent, but they can sometimes skip some basics, so I am going to provide as much context as I can.

The steps are:

- Deploy a k8s cluster with kind

- Deploy Consul and the Consul API Gateway

- Deploy the sample applications that leverage that gateway

Let’s start with cloning the repo:

    % git clone https://github.com/hashicorp/learn-consul-kubernetes.git
    Cloning into 'learn-consul-kubernetes'...
    remote: Enumerating objects: 1648, done.
    remote: Counting objects: 100% (1648/1648), done.
    remote: Compressing objects: 100% (1051/1051), done.
    remote: Total 1648 (delta 818), reused 1289 (delta 541), pack-reused 0
    Receiving objects: 100% (1648/1648), 606.72 KiB | 4.04 MiB/s, done.
    Resolving deltas: 100% (818/818), done.
    % cd learn-consul-kubernetes
    % git checkout v0.0.12
    Note: switching to 'v0.0.12'.

    You are in 'detached HEAD' state. You can look around, make experimental
    changes and commit them, and you can discard any commits you make in this
    state without impacting any branches by switching back to a branch.

    If you want to create a new branch to retain commits you create, you may
    do so (now or later) by using -c with the switch command. Example:

      git switch -c <new-branch-name>

    Or undo this operation with:

      git switch -

    Turn off this advice by setting config variable advice.detachedHead to false

    HEAD is now at 8df861b update README
    % cd api-gateway/

Next, I’m going to use kind to build a Kubernetes cluster. It’s a change from EKS, which I used in my eBPF posts. Surprisingly, I didn’t have kind on my laptop yet, so I had to install it:

    % kind create cluster --config=kind/cluster.yaml
    zsh: command not found: kind
    % brew install kind
    Running `brew update --preinstall`...
    ==> Auto-updated Homebrew!
    Updated 2 taps (hashicorp/tap and homebrew/core).
    ==> New Formulae
    alpscore elixir-ls iodine linux-headers@5.16 spago vermin
    ascii2binary elvis jless netmask sshs vkectl
    asyncapi esphome juliaup numdiff tagref webp-pixbuf-loader
    atlas fb303 kdoctor odo-dev tarlz websocketpp
    bk fbthrift kopia oh-my-posh terminalimageviewer weggli
    boost@1.76 fdroidcl kubescape pinot terraform-lsp xidel
    brev ffmpeg@4 kyverno reshape textidote yamale
    canfigger ghcup libadwaita roapi tfschema zk
    cpptoml http-prompt librasterlite2 ruby@3.0 tidy-viewer
    csview inotify-tools linode-cli rure usbutils
    ==> Updated Formulae
    Updated 1513 formulae.
    ==> Renamed Formulae
    annie -> lux
    ==> Deleted Formulae
    carina go@1.10 go@1.11 go@1.12 go@1.9 gr-osmosdr hornetq path-extractor
    ==> Downloading https://ghcr.io/v2/homebrew/core/kind/manifests/0.11.1
    ######################################################################## 100.0%
    ==> Downloading https://ghcr.io/v2/homebrew/core/kind/blobs/sha256:116a1749c6aee8ad7282caf3a3d2616d11e6193c839c8797cde045cddd0e1138
    ==> Downloading from https://pkg-containers.githubusercontent.com/ghcr1/blobs/sha256:116a1749c6aee8ad7282caf3a3d2616d11e6193c839c8797cde0
    ######################################################################## 100.0%
    ==> Pouring kind--0.11.1.big_sur.bottle.tar.gz
    ==> Caveats
    zsh completions have been installed to:
      /usr/local/share/zsh/site-functions
    ==> Summary
    🍺 /usr/local/Cellar/kind/0.11.1: 8 files, 8.4MB

OK, I am ready to build my local k8s cluster:

    % kind create cluster --config=kind/cluster.yaml
    Creating cluster "kind" ...
     ✓ Ensuring node image (kindest/node:v1.21.1) 🖼
     ✓ Preparing nodes 📦
     ✓ Writing configuration 📜
     ✓ Starting control-plane 🕹️
     ✓ Installing CNI 🔌
     ✓ Installing StorageClass 💾
    Set kubectl context to "kind-kind"
    You can now use your cluster with:

    kubectl cluster-info --context kind-kind

    Have a question, bug, or feature request? Let us know! https://kind.sigs.k8s.io/#community 🙂

That was easy (and cheaper than using EKS or GKE…). Now, let’s install Consul via Helm, which automatically configures the Consul and Kubernetes integration within our existing cluster.

    % helm repo add hashicorp https://helm.releases.hashicorp.com
    "hashicorp" already exists with the same configuration, skipping
    %
    % helm install --values consul/config.yaml consul hashicorp/consul --version "0.40.0"
    Error: INSTALLATION FAILED: failed to download "hashicorp/consul" at version "0.40.0"

Unsurprisingly, I had already added the HashiCorp Helm repo (while doing a previous HashiCorp tutorial), but that was before the Consul API Gateway was live, so my local chart index didn’t know about version 0.40.0. I just had to do a Helm repo update to get going.

    % helm repo update
    Hang tight while we grab the latest from your chart repositories...
    ...Successfully got an update from the "hashicorp" chart repository
    ...Successfully got an update from the "cilium" chart repository
    ...Successfully got an update from the "grafana" chart repository
    ...Successfully got an update from the "prometheus-community" chart repository
    Update Complete. ⎈Happy Helming!⎈

Right, let’s go and install Consul.

    % helm install --values consul/config.yaml consul hashicorp/consul --version "0.40.0"
    NAME: consul
    LAST DEPLOYED: Tue Feb 22 15:56:56 2022
    NAMESPACE: default
    STATUS: deployed
    REVISION: 1
    NOTES:
    Thank you for installing HashiCorp Consul!

    Now that you have deployed Consul, you should look over the docs on using
    Consul with Kubernetes available here:

    https://www.consul.io/docs/platform/k8s/index.html

    Your release is named consul.

    To learn more about the release, run:

      $ helm status consul
      $ helm get all consul

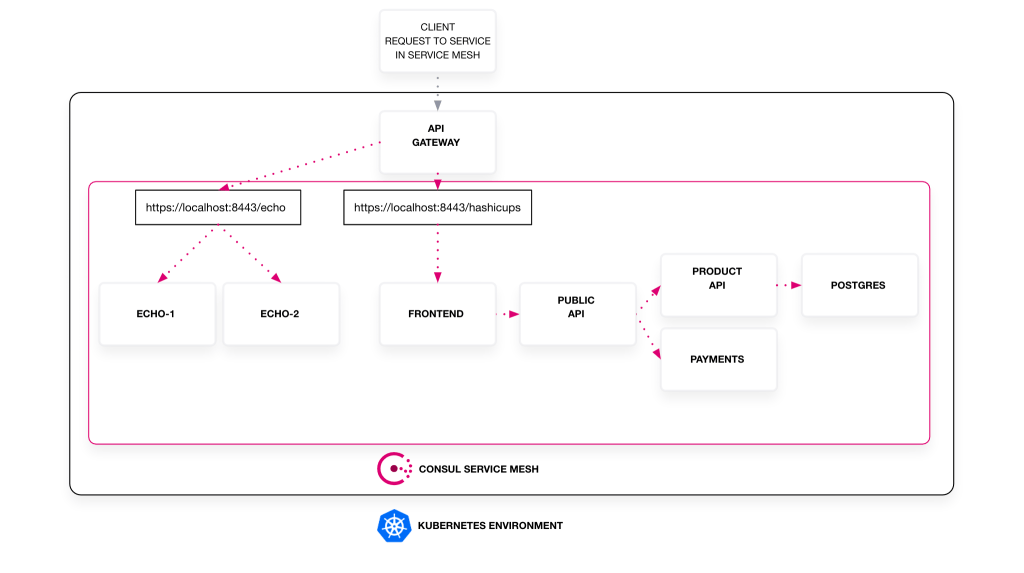

We’re now ready to deploy the sample applications. HashiCups is one of the standard HashiCorp demo apps and leverages microservices and Consul Service Mesh to connect them all together:

Let’s go!

    % kubectl apply --filename two-services/
    servicedefaults.consul.hashicorp.com/echo-1 created
    service/echo-1 created
    deployment.apps/echo-1 created
    servicedefaults.consul.hashicorp.com/echo-2 created
    service/echo-2 created
    deployment.apps/echo-2 created
    servicedefaults.consul.hashicorp.com/frontend created
    service/frontend created
    serviceaccount/frontend created
    configmap/nginx-configmap created
    deployment.apps/frontend created
    serviceintentions.consul.hashicorp.com/public-api created
    serviceintentions.consul.hashicorp.com/postgres created
    serviceintentions.consul.hashicorp.com/payments created
    serviceintentions.consul.hashicorp.com/product-api created
    service/payments created
    serviceaccount/payments created
    servicedefaults.consul.hashicorp.com/payments created
    deployment.apps/payments created
    service/postgres created
    servicedefaults.consul.hashicorp.com/postgres created
    serviceaccount/postgres created
    deployment.apps/postgres created
    service/product-api created
    serviceaccount/product-api created
    servicedefaults.consul.hashicorp.com/product-api created
    configmap/db-configmap created
    deployment.apps/product-api created
    service/public-api created
    serviceaccount/public-api created
    servicedefaults.consul.hashicorp.com/public-api created
    deployment.apps/public-api created
    clusterrolebinding.rbac.authorization.k8s.io/consul-api-gateway-tokenreview-binding created
    clusterrole.rbac.authorization.k8s.io/consul-api-gateway-auth created
    clusterrolebinding.rbac.authorization.k8s.io/consul-api-gateway-auth-binding created
    clusterrolebinding.rbac.authorization.k8s.io/consul-auth-binding created

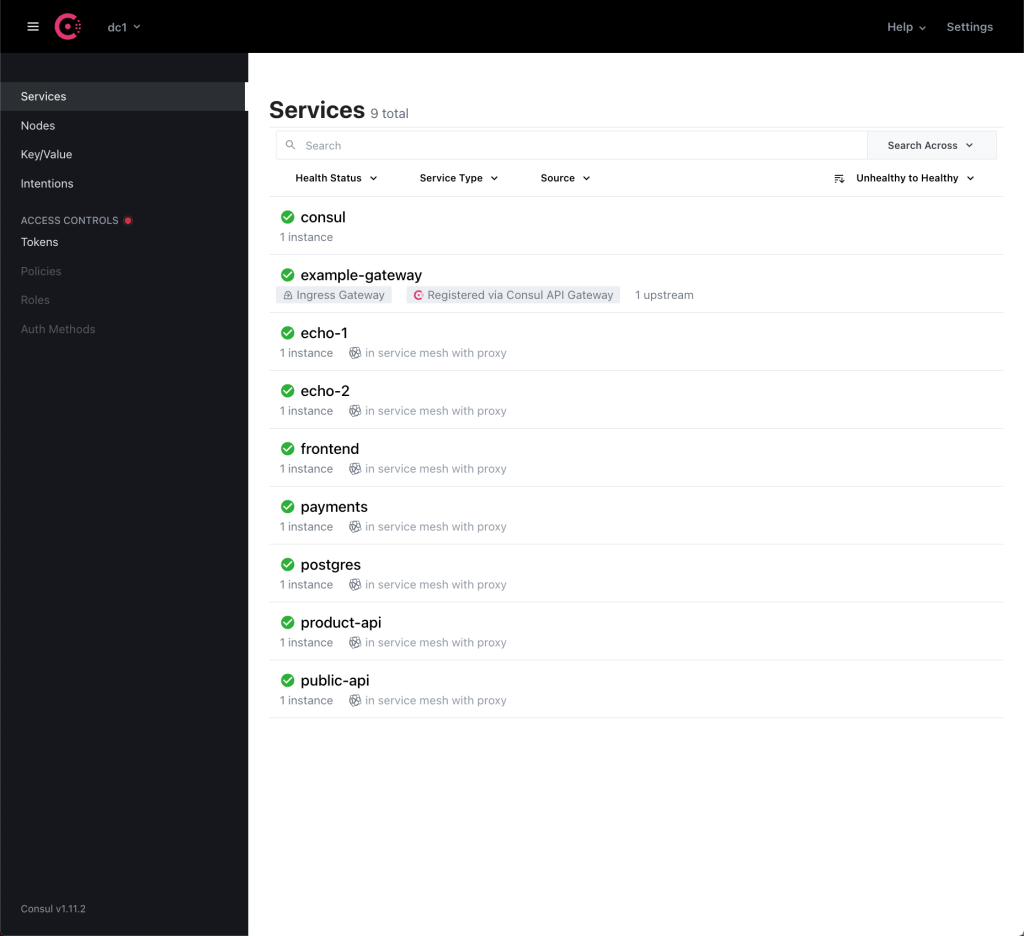

Everything’s working great:

    % kubectl get pods
    NAME                                             READY   STATUS    RESTARTS   AGE
    consul-api-gateway-controller-5c46947749-8c24r   1/1     Running   1          95m
    consul-client-rmjqs                              1/1     Running   0          95m
    consul-connect-injector-5c6557976b-dvj9q         1/1     Running   0          95m
    consul-connect-injector-5c6557976b-mmcnj         1/1     Running   0          95m
    consul-controller-84b676b9bb-plntb               1/1     Running   0          95m
    consul-server-0                                  1/1     Running   0          95m
    consul-webhook-cert-manager-8595bff784-22mmf     1/1     Running   0          95m
    echo-1-79b597d656-476nl                          2/2     Running   0          28m
    echo-2-94b68d65b-z7rlz                           2/2     Running   0          28m
    frontend-5c54674c4-tgwbk                         2/2     Running   0          28m
    payments-77598ddb8f-bphzg                        2/2     Running   0          28m
    postgres-8479965456-s8mhj                        2/2     Running   0          28m
    product-api-dcf898744-zbfv9                      2/2     Running   1          28m
    public-api-7f67d79fb6-hpdh5                      2/2     Running   0          28m

Let’s deploy our API Gateway:

    % kubectl apply --filename api-gw/consul-api-gateway.yaml
    gateway.gateway.networking.k8s.io/example-gateway created

Now, let’s take a look at this file we’ve just applied and let’s start digging into it:

    ---
    apiVersion: gateway.networking.k8s.io/v1alpha2
    kind: Gateway
    metadata:
      name: example-gateway
    spec:
      gatewayClassName: consul-api-gateway
      listeners:
      - protocol: HTTPS
        port: 8443
        name: https
        allowedRoutes:
          namespaces:
            from: Same
        tls:
          certificateRefs:
            - name: consul-server-cert

The Gateway above (appropriately named example-gateway) is actually derived from a GatewayClass (deployed earlier with Helm):

    % kubectl describe GatewayClass
    Name:         consul-api-gateway
    API Version:  gateway.networking.k8s.io/v1alpha2
    Kind:         GatewayClass
    Spec:
      Controller Name:  hashicorp.com/consul-api-gateway-controller
      Parameters Ref:
        Group:  api-gateway.consul.hashicorp.com
        Kind:   GatewayClassConfig
        Name:   consul-api-gateway
    Status:
      Conditions:
        Last Transition Time:  2022-02-22T15:58:32Z
        Message:               Accepted
        Observed Generation:   1
        Reason:                Accepted
        Status:                True
        Type:                  Accepted
    Events:                    <none>

The GatewayClass is a type of Gateway that can be deployed: in other words, it is a template. This is done in a way to let infrastructure providers offer different types of Gateways. Users can then choose the Gateway they like.

Here, the GatewayClass is based on the Consul API Gateway.

And when I create a Gateway, it will be based on the parameters specified in the GatewayClass resource specifications.

One of these parameters is the GatewayClassConfig. That’s used to provide some Consul-specific details, like the API Gateway and Envoy images. Most of this configuration is expressed through Custom Resource Definitions (CRDs).

    % kubectl describe GatewayClassConfig
    Name:         consul-api-gateway
    Namespace:
    API Version:  api-gateway.consul.hashicorp.com/v1alpha1
    Kind:         GatewayClassConfig
    Spec:
      Consul:
        Authentication:
        Ports:
          Grpc:  8502
          Http:  8501
        Scheme:  https
      Copy Annotations:
      Image:
        Consul API Gateway:  hashicorp/consul-api-gateway:0.1.0-beta
        Envoy:               envoyproxy/envoy-alpine:v1.20.1
      Log Level:             info
      Service Type:          NodePort
      Use Host Ports:        true
    Events:                  <none>

Now we understand the Gateway configuration a bit better: it is based on the GatewayClass (and its GatewayClassConfig for Consul-specific parameters).
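To make that chain of references concrete, here is a small Python sketch (not anything Consul actually runs) that follows a Gateway to its GatewayClass and then to the GatewayClassConfig. The dictionaries are trimmed-down copies of the objects above; the flattened key names inside the config and the resolve helper are my own assumptions for illustration.

```python
# Trimmed-down copies of the three objects shown above, as plain dicts.
# Key names inside the config are simplified and hypothetical.
gateway = {
    "kind": "Gateway",
    "name": "example-gateway",
    "gatewayClassName": "consul-api-gateway",
}

gateway_classes = {
    "consul-api-gateway": {
        "controllerName": "hashicorp.com/consul-api-gateway-controller",
        "parametersRef": {
            "group": "api-gateway.consul.hashicorp.com",
            "kind": "GatewayClassConfig",
            "name": "consul-api-gateway",
        },
    }
}

gateway_class_configs = {
    "consul-api-gateway": {
        "serviceType": "NodePort",
        "useHostPorts": True,
        "envoyImage": "envoyproxy/envoy-alpine:v1.20.1",
    }
}


def resolve(gw):
    """Hypothetical helper: Gateway -> GatewayClass -> GatewayClassConfig."""
    gateway_class = gateway_classes[gw["gatewayClassName"]]
    config = gateway_class_configs[gateway_class["parametersRef"]["name"]]
    return gateway_class["controllerName"], config


controller, config = resolve(gateway)
print(controller)
print(config["serviceType"], config["envoyImage"])
```

The point is only that the Gateway itself stays small: it names a class, and the class points at the vendor-specific settings.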

If we look back at the spec:

    spec:
      gatewayClassName: consul-api-gateway
      listeners:
      - protocol: HTTPS
        port: 8443
        name: https
        allowedRoutes:
          namespaces:
            from: Same
        tls:
          certificateRefs:
            - name: consul-server-cert

It’s actually pretty clear: we are listening on port 8443 for HTTPS traffic (going back to the North/South requirement: that’s the traffic coming southbound into the cluster). The allowedRoutes field specifies the namespaces from which Routes may be attached to this Listener. By default, it’s “Same”, i.e. only Routes in the Gateway’s own namespace can attach.

Interestingly, when we use “All” instead of “Same”, routes in any namespace can bind to this gateway, which lets us share a single gateway across multiple namespaces that may be managed by different teams. Again, in my networking terms, I would compare it to MPLS or, more specifically, to VRF Lite.
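Here is a minimal Python sketch of how I understand that attachment check. It only models the “Same” and “All” values discussed above (the spec also defines a selector-based option that I’m leaving out), and the function name and the “payments” namespace are hypothetical.

```python
def route_may_attach(listener, gateway_namespace, route_namespace):
    """Return True if a Route in route_namespace may attach to this listener.

    Only the "Same" and "All" modes are modelled in this sketch.
    """
    namespaces = listener.get("allowedRoutes", {}).get("namespaces", {})
    mode = namespaces.get("from", "Same")  # "Same" is the default
    if mode == "All":
        return True
    if mode == "Same":
        return route_namespace == gateway_namespace
    raise ValueError(f"mode not covered by this sketch: {mode}")


# The listener from example-gateway, reduced to the field we care about.
same_listener = {"allowedRoutes": {"namespaces": {"from": "Same"}}}
all_listener = {"allowedRoutes": {"namespaces": {"from": "All"}}}

print(route_may_attach(same_listener, "default", "default"))   # True
print(route_may_attach(same_listener, "default", "payments"))  # False
print(route_may_attach(all_listener, "default", "payments"))   # True
```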

It’s probably now a good time to look at these Routes – more precisely, these HTTPRoutes:

    ---
    apiVersion: gateway.networking.k8s.io/v1alpha2
    kind: HTTPRoute
    metadata:
      name: example-route-1
    spec:
      parentRefs:
      - name: example-gateway
      rules:
      - matches:
        - path:
            type: PathPrefix
            value: /echo
        backendRefs:
        - kind: Service
          name: echo-1
          port: 8080
          weight: 50
        - kind: Service
          name: echo-2
          port: 8090
          weight: 50
    ---
    apiVersion: gateway.networking.k8s.io/v1alpha2
    kind: HTTPRoute
    metadata:
      name: example-route-2
    spec:
      parentRefs:
      - name: example-gateway
      rules:
      - matches:
        - path:
            type: PathPrefix
            value: /
        backendRefs:
        - kind: Service
          name: frontend
          port: 80

With all the sophistication around Kubernetes, in the end we’re back to creating static routes 😀

This is essentially what the spec above does: specify the routing but also some load-balancing requirements:

- The routes are both attached to the “example-gateway” Gateway we built earlier.

- They have Rules to describe which traffic we’re going to send where. The PathPrefix type means we’re matching based on the URL path.

- If the path starts with “/echo”, we’ll send the traffic over to the echo services. Given the 50/50 weight, we’ll just load-balance the traffic evenly between the two echo services.

- For everything else, we’ll send the traffic over to the frontend service.

- When we actually go ahead and access the load balancer over port 8443 (remember, that’s the port we are listening on) with a path under /echo, the traffic will be load-balanced between our two echo services.
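The matching logic in the bullets above can be sketched in a few lines of Python. This is my reading of the Gateway API spec, not the Consul implementation: a PathPrefix is compared path element by path element, and when several prefixes match, the longest one wins. The function names and the ROUTES table are hypothetical; the prefixes and service names come from the two HTTPRoutes.

```python
def prefix_matches(prefix, path):
    """Element-wise prefix match: /echo matches /echo and /echo/x, not /echoes."""
    prefix_parts = [part for part in prefix.split("/") if part]
    path_parts = [part for part in path.split("/") if part]
    return path_parts[:len(prefix_parts)] == prefix_parts


# (prefix, backend services) taken from example-route-1 and example-route-2.
ROUTES = [
    ("/echo", ["echo-1", "echo-2"]),
    ("/", ["frontend"]),
]


def pick_backends(path):
    """Return the backends of the longest matching prefix."""
    matching = [(prefix, backends) for prefix, backends in ROUTES
                if prefix_matches(prefix, path)]
    # "/" matches every path, so there is always at least one candidate here.
    _, backends = max(matching, key=lambda match: len(match[0]))
    return backends


print(pick_backends("/echo"))      # ['echo-1', 'echo-2']
print(pick_backends("/echo/foo"))  # ['echo-1', 'echo-2']
print(pick_backends("/echoes"))    # ['frontend']
print(pick_backends("/"))          # ['frontend']
```

So a request does not merely need “echo” somewhere in the URL: the path has to begin with the /echo element to land on the echo services.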

When I change the weights to 99 and 1 to introduce canary deployments and re-apply the routes, almost all the traffic goes to the echo-1 service as I expect.

    apiVersion: gateway.networking.k8s.io/v1alpha2
    kind: HTTPRoute
    metadata:
      name: example-route-1
    spec:
      parentRefs:
      - name: example-gateway
      rules:
      - matches:
        - path:
            type: PathPrefix
            value: /echo
        backendRefs:
        - kind: Service
          name: echo-1
          port: 8080
          weight: 99
        - kind: Service
          name: echo-2
          port: 8090
          weight: 1

Let’s check it works by running a “while” loop (it runs until you stop it with Ctrl-C) and then counting the responses from each service:

    % while true; do curl -s -k "https://localhost:8443/echo" >> curlresponses.txt; done
    % grep -c "Hostname: echo-1" curlresponses.txt
    1179
    % grep -c "Hostname: echo-2" curlresponses.txt
    9
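As a sanity check on those numbers, here is a rough Python sketch of weighted backend selection. It is a simulation under my own assumptions (a simple weighted random pick, which may not be how Envoy chooses a backend), and the pick_backend helper is hypothetical. It also compares the share I observed with the share the weights ask for.

```python
import random


def pick_backend(backends, rng):
    """Pick one backend name at random, proportionally to its weight."""
    names = [name for name, _ in backends]
    weights = [weight for _, weight in backends]
    return rng.choices(names, weights=weights, k=1)[0]


# Weights from the updated example-route-1.
backends = [("echo-1", 99), ("echo-2", 1)]

# Simulate as many requests as I collected above (1179 + 9).
rng = random.Random(42)
counts = {"echo-1": 0, "echo-2": 0}
for _ in range(1179 + 9):
    counts[pick_backend(backends, rng)] += 1

expected_share = 1 / (99 + 1)
observed_share = 9 / (1179 + 9)
print(f"expected echo-2 share: {expected_share:.2%}")
print(f"observed echo-2 share: {observed_share:.2%}")
print("simulated counts:", counts)
```

Nine responses out of 1,188 is about 0.76%, which is close to the 1% the weights ask for given how few echo-2 hits a sample of that size produces.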

What’s also cool is that we could specify different namespaces in the HTTPRoutes. For example, you could send traffic for https://acme.com/payments to a namespace where the payment app is deployed and traffic for https://acme.com/ads to a namespace used by the ads team for their application.

Once the traffic enters the cluster from North to South, it would then, in most cases, need to go East-West across the Service Mesh cluster. Obviously here, that’s using the Consul Service Mesh functionality.

That’s it! In summary, the Gateway API addresses some of the deficiencies in the Ingress APIs and it’s clear most vendors in the API Gateway/Service Mesh/Load-Balancing world will adapt their platform to this new model.

Thanks for reading.

There must have been some breaking changes introduced, as the initial Consul Helm install (using “consul/values.yaml”) now fails when trying to install on KIND.

Error: INSTALLATION FAILED: unable to build kubernetes objects from release manifest: [resource mapping not found for name: "consul-api-gateway" namespace: "" from "": no matches for kind "GatewayClass" in version "gateway.networking.k8s.io/v1alpha2" ensure CRDs are installed first, resource mapping not found for name: "consul-api-gateway" namespace: "" from "": no matches for kind "GatewayClassConfig" in version "api-gateway.consul.hashicorp.com/v1alpha1" ensure CRDs are installed first]

I have yet to find an installation that actually works on KIND in 2024.


check out our Cilium gateway API labs on isovalent.com/labs – they all run on Kind
